AMD Announces New Version of Radeon Open Compute

In a new press release, AMD highlighted its recent accomplishments and announced ROCm 3.0, the latest version of its open source GPU compute platform and its counterpart to Nvidia's CUDA. Unlike CUDA, which runs only on Nvidia hardware, ROCm is not designed to be tied to a single vendor's GPUs.

What is ROCm?

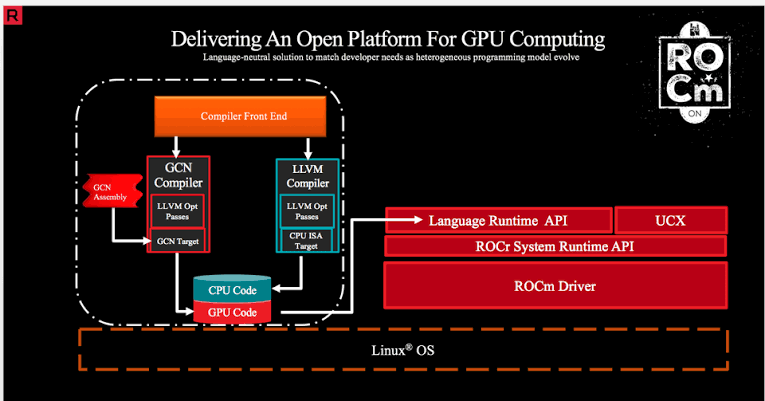

AMD describes ROCm as a universal platform for GPU-accelerated computing: because the stack is open, any hardware vendor can build drivers that support it. ROCm supports several programming models, including HIP, OpenCL and OpenMP, and it even provides tools (the HIPIFY utilities) for porting CUDA code written for Nvidia GPUs.
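Much of what the CUDA porting tools do is source-to-source translation: CUDA runtime calls such as `cudaMalloc` become their HIP equivalents such as `hipMalloc`. The Python snippet below is only a toy illustration of that renaming idea, assuming a small hand-written rename table (`CUDA_TO_HIP` and `toy_hipify` are hypothetical names). It is not how hipify-clang works, since that tool parses code with Clang, and it covers only a handful of identifiers.

```python
import re

# Hypothetical, deliberately tiny subset of CUDA-to-HIP renames.
# The real HIPIFY tools cover far more of the CUDA API.
CUDA_TO_HIP = {
    "cuda_runtime.h": "hip/hip_runtime.h",
    "cudaMalloc": "hipMalloc",
    "cudaMemcpyHostToDevice": "hipMemcpyHostToDevice",
    "cudaMemcpyDeviceToHost": "hipMemcpyDeviceToHost",
    "cudaMemcpy": "hipMemcpy",
    "cudaFree": "hipFree",
    "cudaDeviceSynchronize": "hipDeviceSynchronize",
}

# Match whole identifiers only (\b on both sides), trying longer names first,
# so unlisted names like cudaMallocManaged are left untouched.
_PATTERN = re.compile(
    r"\b(?:"
    + "|".join(re.escape(k) for k in sorted(CUDA_TO_HIP, key=len, reverse=True))
    + r")\b"
)


def toy_hipify(source: str) -> str:
    """Replace each CUDA identifier in the table with its HIP equivalent."""
    return _PATTERN.sub(lambda m: CUDA_TO_HIP[m.group(0)], source)


if __name__ == "__main__":
    cuda_src = (
        "#include <cuda_runtime.h>\n"
        "cudaMalloc(&d_x, n * sizeof(float));\n"
        "cudaMemcpy(d_x, h_x, n * sizeof(float), cudaMemcpyHostToDevice);\n"
        "cudaFree(d_x);\n"
    )
    print(toy_hipify(cuda_src))
```

A plain lookup table keeps the sketch readable, but it also shows why real porting tools are more involved: they have to handle macros, kernel launches, libraries and CUDA features that have no direct HIP counterpart.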

The ROCm development cycle features monthly releases, offering developers a regular cadence of improvements and updates to compilers, libraries, profilers, debuggers and system management tools. Major development milestones featured at SC19 (the Supercomputing 2019 conference) include:

- Introduction of ROCm 3.0, with support for HIP-clang (a compiler built on LLVM), improved CUDA conversion with hipify-clang, and library optimizations for both HPC and ML.

- Upstream ROCm integration into the leading TensorFlow and PyTorch machine learning frameworks, for applications such as reinforcement learning, autonomous driving, and image and video detection.

- Expanded acceleration support for HPC programming models and applications such as OpenMP, LAMMPS and NAMD.

- New support for system and workload deployment tools like Kubernetes, Singularity, SLURM, TAU and others.
